Table of Contents

AFQuery

Fast, file-based genomic allele frequency queries for large cohorts. No server, no cloud — just files.

AFQuery stores genotype data as Roaring Bitmaps in Parquet files and answers allele frequency queries in under 100 ms across large cohorts, with flexible filtering by sex, phenotype codes (arbitrary sample labels), and sequencing technology.

When to Use AFQuery

- You need allele frequencies for phenotype-defined subgroups (not just whole-population AF)

- You mix WGS, WES, and panels in one cohort and need technology-aware AN

- You require reproducible local AF computed on your own samples — not just public reference databases

- You run repeated queries on the same dataset (annotation, clinical interpretation, research)

- You need sub-100 ms query latency without database servers or cloud infrastructure

How It Works

AFQuery pre-indexes genotypes as Roaring Bitmaps in Parquet files. At query time, it intersects carrier bitmaps with eligible-sample bitmaps (determined by sex, phenotype, and capture filters) and counts bits — reducing each query to microsecond-scale bitmap operations with sub-100 ms end-to-end latency.

Features

- Sub-100 ms queries — bitmap operations, not VCF scanning. Latency is independent of cohort size.

- Incremental updates — add or remove samples without rebuilding the database.

- Multi-dimensional filtering — filter by sex, phenotype codes, and sequencing technology with include/exclude semantics.

- Server-less — a directory of Parquet files + SQLite. Copy to share, no daemon required.

- Ploidy-aware — correct AN on chrX PAR/non-PAR, chrY, and chrM.

- Technology-aware AN — per-position capture BED intersection across WGS, WES kits, and panels.

- Carrier lookup — list samples carrying any variant with full metadata (sex, tech, phenotypes, genotype, FILTER status).

- VCF annotation — add

AFQUERY_AC/AN/AF/N_HET/N_HOM_ALT/N_HOM_REF/N_FAIL/N_NO_COVERAGEINFO fields from any sample subset. - Audit changelog — every database operation is recorded for reproducibility.

Architecture

graph TD

A["🔍 Input VCFs<br/>single-sample"]

B["📥 Ingest<br/>cyvcf2 reads VCFs →<br/>per-sample Parquet files emitted"]

C["🏗️ Build<br/>DuckDB aggregates per bucket →<br/>Roaring Bitmaps → Parquet"]

D["💾 Database on Disk<br/>variants/chr*/bucket_*.parquet<br/>capture/*.pkl<br/>metadata.sqlite<br/>manifest.json"]

E["⚡ Query Engine<br/>Load bitmap → filter samples →<br/>compute AC/AN/AF<br/>~10-100ms"]

F["📊 Annotate"]

G["🔄 Update"]

A --> B

B --> C

C --> D

D --> E

E -.->|VCF| F

E -.->|Batch| G

G -.->|Updated| D

style A fill:#e1f5ff

style B fill:#fff3e0

style C fill:#f3e5f5

style D fill:#e8f5e9

style E fill:#fce4ec

style F fill:#fce4ec

style G fill:#fce4ecWhere to Start

graph TD

A["What do you want to do?"]

B["Build a database<br/>from VCFs"]

C["Query allele<br/>frequencies"]

D["Annotate a<br/>patient VCF"]

E["Classify variants<br/>using ACMG criteria"]

F["Compare AF across<br/>groups"]

M["Find carriers of<br/>a variant"]

A -->|First time| G["5-Min Quickstart"]

A -->|Build| B

A -->|Query| C

A -->|Annotate| D

A -->|Classify| E

A -->|Compare| F

A -->|Carriers| M

B --> H["Create a Database"]

C --> I["Query Guide"]

D --> J["Annotate a VCF"]

E --> K["ACMG Criteria"]

F --> L["Cohort Stratification"]

M --> N["Variant Info"]

click G "getting-started/quickstart/"

click H "guides/create-database/"

click I "guides/query/"

click J "guides/annotate-vcf/"

click K "use-cases/acmg-use-cases/"

click L "use-cases/cohort-stratification/"

click N "guides/variant-info/"

style A fill:#e3f2fd

style G fill:#e8f5e9Next Steps

- Why Local AF Matters — population gaps, technology bias, and the research behind AFQuery

- Installation — pip, conda, from source

- Quickstart — 5-minute end-to-end tutorial

- Key Concepts — bitmaps, Parquet, manifest, metadata model

Getting Started

Why Local Allele Frequencies Matter

Clinical Decisions Depend on Accurate AF

Allele frequency (AF) is one of the strongest lines of evidence in clinical variant classification. Under the ACMG/AMP framework, AF thresholds directly determine whether a variant is classified as benign (BA1: AF > 5%), supporting-pathogenic (PM2: absent or extremely rare), or strongly pathogenic through case enrichment (PS4). A misestimated AF can flip a classification — and with it, a clinical decision.

Yet AF is not an intrinsic property of a variant — it depends on the population in which it is measured. The same variant can be common in one cohort and absent in another. When the reference population does not match the patient's background, variant classification becomes unreliable.

This page describes four methodological gaps in current allele frequency workflows that AFQuery was designed to address.

Gap 1 — Global Databases Miss Population-Specific Signals

Population databases like gnomAD are invaluable for identifying common variants, but they aggregate data from broad, predominantly European-ancestry populations. When the patient's ancestry differs from the reference, AF estimates diverge — sometimes dramatically.

Real-world impact

Turkish breast cancer variants showed up to 354-fold higher allele frequencies in a local variome compared to gnomAD, leading to 6.7% of VUS being reclassified to likely benign when population-matched data were used (Agaoglu et al., 2024). In Taiwanese inherited retinal degeneration, using a local biobank as ancestry-matched controls for PS4 evidence upgraded 2 variants from LP to P and 6 from VUS to LP (Huang et al., 2026).

The problem extends beyond rare ancestries. In a UK arrhythmia clinic, 32.2% of VUS were reclassified upon re-evaluation with updated frequency data (Young et al., 2024). Analysis of 469,803 UK Biobank exomes found that 12.4% of rare LDLR VUS met criteria for reclassification to likely pathogenic when biobank-derived odds ratios were calibrated to ACMG PS4 strength levels (Bhat et al., 2025).

These are not edge cases. Every cohort with a population composition that differs from gnomAD — geographically, clinically, or by ascertainment — may produce misleading AF when compared only against global references.

| Study | Population | Key Finding |

|---|---|---|

| Agaoglu et al. 2024 | Turkish breast cancer | Up to 354× AF difference vs gnomAD; 6.7% VUS reclassified |

| Huang et al. 2026 | Taiwanese IRD | 8 variants reclassified using local biobank PS4 evidence |

| Young et al. 2024 | UK arrhythmia clinic | 32.2% of VUS reclassified on re-evaluation |

| Bhat et al. 2025 | UK Biobank (470K) | 12.4% rare LDLR VUS reclassifiable via biobank OR |

| Kotan 2022 | Turkish endocrinology | Population-matched variomes correlate best geographically |

| Soussi 2022 | Multi-ethnic (TP53) | 21 benign TP53 SNPs missed in European-biased databases |

| Dawood et al. 2024 | Multi-ethnic (MAVE) | AF evidence codes have inequitable impact on non-Europeans (p = 7.47×10⁻⁶) |

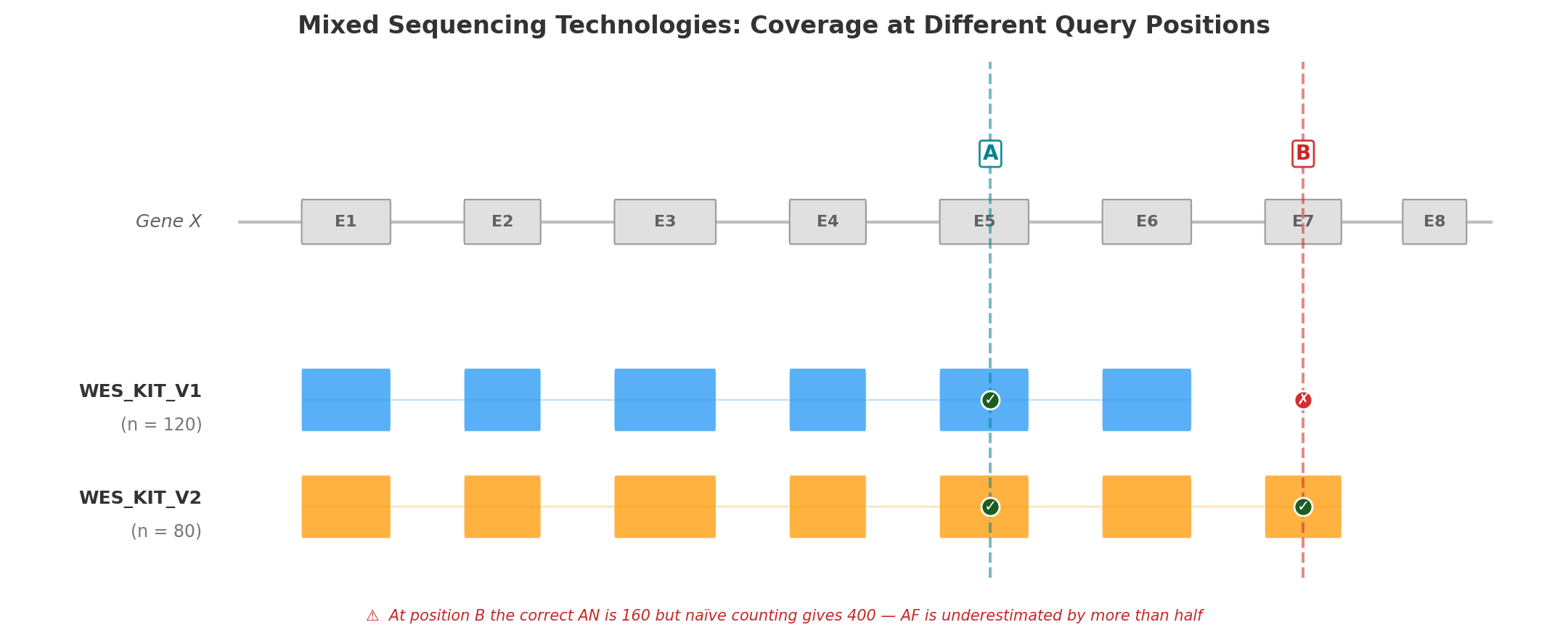

Gap 2 — Mixed Sequencing Technologies Inflate the Denominator

Modern cohorts routinely combine samples sequenced with different technologies — WGS, multiple WES capture kits, and targeted gene panels. Even different versions of the same WES kit can differ by hundreds of base pairs at capture boundaries.

This matters because allele number (AN) must be computed per position, not per cohort. A sample whose capture kit does not cover the queried position has no genotype there: it contributes nothing to AN. If it is naively counted, the denominator is inflated and the resulting AF is artificially deflated.

The figure below illustrates the problem with a minimal example: two versions of the same WES capture kit (V1, n = 120 and V2, n = 80) sequencing a schematic gene with eight exons.

Position A (Exon 5) falls within the capture region of both kit versions. All 200 samples have a genotype at this position, so AN = 200 × 2 = 400. Both kits contribute to the count, and the resulting AF is correct.

Position B (Exon 7) is covered only by WES_KIT_V2. The 120 samples sequenced with V1 have no data at this position. The correct AN is 80 × 2 = 160 — but a naïve calculation that ignores capture boundaries would use 200 × 2 = 400, underestimating AF by more than half. A variant with AC = 5 would appear to have AF = 1.25% (5/400) instead of the true 3.13% (5/160) — enough to cross PM2 filtering thresholds and mislead clinical interpretation.

This is not hypothetical. In the Alzheimer's Disease Sequencing Project, hidden variant-level batch effects between two exome capture kits significantly impacted disease-associated variant identification, with a subset of top risk variants originating exclusively from one kit (Wickland et al., 2021). A population-based WES study found that separating samples by capture protocol yielded 40.9% more high-quality variants than pooling them (Carson et al., 2014).

No general-purpose VCF tool automates per-position, per-technology AN computation across dozens of BED files.

Gap 3 — Static Tools Require Reprocessing

Standard VCF tools — bcftools, VCFtools, GATK — operate on static VCF files. Computing AF over a different sample subset requires:

- Selecting samples by external metadata

- Subsetting the VCF

- Running AF computation

- Repeating for each new subset

This is adequate for one-time analyses but prohibitive for interactive clinical variant interpretation, where a geneticist may need AF across dozens of subsets in a single session: by sex, by phenotype, by technology, by combinations thereof.

For a cohort of 10,000 samples with 5 million variants, reprocessing takes minutes per subset. At 20 subsets per clinical session, the wall time is measured in hours — turning what should be an interactive workflow into a batch job.

Gap 4 — No Metadata-Aware Filtering

Computing AF over "female WGS samples tagged with phenotype E11.9, excluding those also tagged I42" requires orchestrating multiple tools: extract sample IDs from a metadata database, subset the VCF, compute statistics. This multi-step process is error-prone (sample ID mismatches, off-by-one in subsetting) and precludes real-time exploratory analysis.

The lack of integrated metadata filtering is particularly problematic for:

- Pseudo-control analysis — computing AF in all samples except those with a specific disease to assess case enrichment (ACMG PS4)

- Sex-stratified AF — essential for X-linked variant interpretation, where males and females have different ploidy

- Technology-stratified QC — identifying variants that appear only in one sequencing technology, suggesting artifacts rather than true variation

How AFQuery Addresses These Gaps

AFQuery introduces a pre-indexed database architecture that separates the slow step (building the genotype index from VCFs) from the fast step (querying AF on arbitrary subgroups).

The key data structure is the Roaring Bitmap — a compressed bitset that records, for each variant, which samples carry the alternate allele. At query time, computing AC/AN/AF requires only:

- Loading the variant's carrier bitmap from Parquet storage

- Intersecting with the bitmap of eligible samples (determined by sex, phenotype, and capture filters)

- Counting set bits (popcount)

This reduces each query to microsecond-scale bitmap operations, achieving sub-100 ms end-to-end latency including Parquet I/O — regardless of cohort size.

| Gap | Existing tools | AFQuery |

|---|---|---|

| Population-specific AF | Compare against gnomAD; build separate databases per population | Compute AF on any phenotype-defined subgroup at query time |

| Mixed technologies | Manual BED intersection or ignore the problem | Automatic per-position, per-technology AN via capture index |

| Reprocessing | Re-scan VCF per subset (minutes) | Bitmap intersection (milliseconds) |

| Metadata filtering | Multi-step: extract IDs → subset VCF → compute | Single query with --phenotype, --sex, --tech flags |

Next Steps

- Installation — get started

- Key Concepts — how bitmaps, Parquet, and metadata filtering work together

- ACMG Criteria — applying local AF to BA1, PM2, and PS4

Installation

Requirements

- Python 3.10 or later

- For the build phase (

create-db): 2–8 GB RAM per worker (see Performance Tuning)

pip

To install the latest development version directly from GitHub:

conda / mamba (bioconda)

Or with mamba (faster):

Docker

Official images are published to GitHub Container Registry for every release.

Run the CLI by mounting a local directory with your database:

docker run --rm \

-v /path/to/db:/db \

ghcr.io/babelomics/afquery:latest \

query --db /db --locus chr1:925952 --phenotype E11.9

Note

Images are available for linux/amd64 and linux/arm64. The latest tag always points to the most recent stable release.

From Source

To also install documentation dependencies:

Optional: Documentation Dependencies

If you want to build or serve the documentation locally:

Next Steps

- VCF Preprocessing — normalize and prepare VCFs before ingestion

- 5-Min Quickstart — build your first database and run queries

- Key Concepts — understand the bitmap index, manifest, and metadata model

Quickstart

This tutorial walks through building a small AFQuery database and running your first queries. It takes about 5 minutes.

New to AFQuery?

If you want to understand how bitmaps, Parquet storage, and metadata filtering work together before diving in, read Key Concepts first. Otherwise, follow along — you can always come back to the theory later.

Normalize your VCFs first

AFQuery works best with normalized, left-aligned VCFs with ploidy-corrected sex chromosome calls. See VCF Preprocessing for a reference normalization pipeline using bcftools.

1. Create a Manifest

The manifest is a TSV file describing your initial cohort. Create manifest.tsv:

sample_name vcf_path sex tech_name phenotype_codes

SAMPLE_001 /data/vcfs/sample001.vcf.gz female wgs E11.9,I10

SAMPLE_002 /data/vcfs/sample002.vcf.gz male wgs E11.9

SAMPLE_003 /data/vcfs/sample003.vcf.gz female wes_v1 I10

Fields:

sample_name: unique identifiervcf_path: path to single-sample VCF (plain or.gz)sex:maleorfemaletech_name: sequencing technology name. UseWGSfor whole genome sequencingphenotype_codes: comma-separated phenotype codes (arbitrary strings)

See Manifest Format for full details.

2. Create the Database

afquery create-db \

--manifest manifest.tsv \

--output-dir ./my_db/ \

--genome-build GRCh38 \

--bed-dir ./beds/ \

--threads 12

BED files are required for WES and panel technologies. Place one file per technology in the --bed-dir directory, named <tech_name>.bed (e.g., wes_v1.bed). WGS technologies do not need a BED file.

The command will:

1. Ingest all VCFs

2. Build Roaring Bitmap Parquet files per chromosome/bucket

3. Write manifest.json and metadata.sqlite

3. Inspect the Database (optional)

4. Query a Single Position

Example output:

Filter to female samples with a specific phenotype:

See Sample Filtering for the full include/exclude syntax.

Warnings for missing data

If a phenotype code, technology name, or chromosome is not found in the database, afquery prints a warning to stderr and returns empty results. Use --no-warn to suppress these warnings.

5. Inspect Carriers (optional)

See which samples carry the variant you just queried:

This lists each carrier with their sex, technology, phenotype codes, genotype (het/hom), and FILTER status. See Variant Info for details.

6. Query a Region

7. Annotate a VCF

Given a VCF with variants you want to annotate:

The output VCF gains INFO fields (see Annotate a VCF for parallelism options and downstream usage):

| Field | Number | Description |

|---|---|---|

AFQUERY_AC |

A (per ALT) | Allele count |

AFQUERY_AN |

1 (per site) | Allele number |

AFQUERY_AF |

A (per ALT) | Allele frequency |

AFQUERY_N_HET |

A (per ALT) | Heterozygous sample count |

AFQUERY_N_HOM_ALT |

A (per ALT) | Homozygous alt sample count |

AFQUERY_N_HOM_REF |

A (per ALT) | Homozygous ref sample count |

AFQUERY_N_FAIL |

1 (per site) | Samples with FILTER≠PASS |

AFQUERY_N_NO_COVERAGE |

A (per ALT) | Eligible samples lacking coverage evidence (0 unless a coverage-evidence filter is active) |

Next Steps

- Key Concepts — understand how bitmaps, Parquet, and metadata filtering work together

- Sample Filtering — full syntax for phenotype, sex, and technology filters

- Variant Info — list carriers of any variant with metadata

- Annotate a VCF — annotation options, parallelism, and downstream usage

- ACMG Criteria — applying local AF to BA1, PM2, and PS4

Tutorial: End-to-End Walkthrough

This tutorial walks through every major AFQuery feature using a synthetic demo dataset. By the end, you will have built a database, queried variants, filtered by metadata, annotated a VCF, and exported results.

Prerequisites

Make sure AFQuery is installed. See Installation if needed.

1. Generate Demo Data

AFQuery ships with a script that creates 10 synthetic VCFs, a manifest, and BED files for two WES technologies:

This creates examples/demo/demo_output/ with:

vcfs/— 10 single-sample VCFs (DEMO_001 through DEMO_010)beds/— capture BED files forwes_v1andwes_v2manifest.tsv— sample metadata

The demo cohort has 4 WGS samples, 3 wes_v1 samples, and 3 wes_v2 samples, with phenotype codes E11.9, I10, and control. It includes a set of variants at fixed positions so all query examples in this tutorial produce reproducible output.

2. Create the Database

afquery create-db \

--manifest examples/demo/demo_output/manifest.tsv \

--output-dir ./demo_db/ \

--genome-build GRCh38 \

--bed-dir examples/demo/demo_output/beds/

This ingests all VCFs, builds Roaring Bitmap Parquet files, and writes manifest.json and metadata.sqlite.

3. Inspect the Database

Example output:

Database: ./demo_db/

Version: 1.0

Genome build: GRCh38 Schema: 2.0

Created: ... Updated: N/A

Samples: 10 total

By sex: female=5 male=5

By tech: wes_v1=3 wes_v2=3 wgs=4

By phenotype: E11.9=4 I10=5 control=4

The 10 samples span 3 technologies. Phenotype counts reflect samples tagged with each code; a sample may have multiple codes.

4. Query a Single Variant

Query the variant at chr1:925952:

Example output:

Reading the output

Every query result includes the following fields:

| Field | Description |

|---|---|

| AC | Allele count — alt allele copies in eligible samples |

| AN | Allele number — total alleles examined (adjusted for coverage and ploidy) |

| AF | Allele frequency — AC / AN |

| n_eligible | Eligible samples at this position — those passing the metadata filters and covered here. Ploidy is applied afterwards, to AN. Here, 10 = no filter applied and every sample is covered. |

| N_HET | Eligible samples heterozygous for the alt allele |

| N_HOM_ALT | Eligible samples homozygous for the alt allele |

| N_HOM_REF | Eligible samples homozygous reference |

| N_FAIL | Eligible samples with a non-ref allele called but FILTER≠PASS in the source VCF |

For chr1:925952: AN=20 = 10 diploid samples × 2 alleles; AC=6 = 4 het samples (1 copy each) + 1 hom-alt (2 copies).

Accounting identity: n_eligible = N_HET + N_HOM_ALT + N_HOM_REF + N_FAIL + N_NO_COVERAGE always holds. N_NO_COVERAGE is 0 here (it only becomes non-zero with a coverage-evidence filter), so 10 = 4+1+5+0+0 ✓.

N_FAIL in practice

Some variants have samples whose call passed genotyping but failed a quality filter in the source VCF. Query the variant at chr1:946000:

Example output:

Here, 2 samples were flagged as LowQual in their source VCF at this position. N_FAIL samples are:

- Counted in AN — they are eligible and contribute to the denominator

- Excluded from AC — their alleles are not counted in the numerator

This makes AF a conservative estimate: AF=2/20=0.1000 even though 2 additional samples may carry the variant. Verify: 10 = 2+0+6+2 ✓.

Tip

N_FAIL > 0 is a signal to inspect source VCF quality at this site. A high N_FAIL relative to n_eligible may indicate a systematic sequencing artifact. See Understanding Output for thresholds.

Region query

To find all variants in the WES capture region:

Example output:

chr1:925100 C>T AC=4 AN=20 AF=0.2000 n_eligible=10 N_HET=4 N_HOM_ALT=0 N_HOM_REF=6 N_FAIL=0

chr1:925952 G>A AC=6 AN=20 AF=0.3000 n_eligible=10 N_HET=4 N_HOM_ALT=1 N_HOM_REF=5 N_FAIL=0

chr1:946000 T>C AC=2 AN=20 AF=0.1000 n_eligible=10 N_HET=2 N_HOM_ALT=0 N_HOM_REF=6 N_FAIL=2

5. Inspect Variant Carriers

After finding a variant of interest, use variant-info to see which specific samples carry it:

Example output:

sample_id sample_name sex tech phenotypes genotype filter

--------- ----------- ------ ------ --------------- -------- ------

0 DEMO_001 female wgs E11.9,I10 het PASS

2 DEMO_003 male wgs E11.9 het PASS

4 DEMO_005 female wes_v1 E11.9,control het PASS

6 DEMO_007 male wes_v2 control het PASS

8 DEMO_009 female wgs E11.9 hom PASS

Each row is one carrier. The genotype column shows het (heterozygous), hom (homozygous alt), or alt (non-ref with FILTER≠PASS). The filter column indicates whether the call passed quality filters in the source VCF.

For machine-readable output, use --format tsv:

See Variant Info for full options including allele-specific queries and sample filtering.

6. Filter by Sex

Query only female samples:

Example output:

Now query male samples:

Example output:

AN drops from 20 to 10 in both cases because only 5 samples are eligible. The AF happens to be identical here (0.3000), but the genotype distributions differ: the male group has one homozygous-alt carrier while the female group has three heterozygous carriers.

7. Filter by Phenotype

Query samples tagged with E11.9:

Example output:

Exclude control samples:

Example output:

The ^ prefix means "exclude". Excluding controls removes 4 samples, leaving 6. See Sample Filtering for the full syntax.

8. Filter by Technology

Restrict to WGS samples only:

Example output:

Now query each WES technology separately:

chr1:925952 falls inside the chr1:900000-950000 capture region shared by both WES kits. All 3 wes_v1 samples and all 3 wes_v2 samples are covered, so AN=6 (3 samples × 2 alleles) for both.

The critical case: a position outside all WES capture regions

Now query a position that is not in any WES BED file:

chr1:1399914 lies between the two chr1 WES capture regions (900000–950000 and 1200000–1250000). When you restrict to --tech wes_v1, AFQuery excludes all wes_v1 samples from AN because none of them have coverage at this position — AN=0, so no results are returned.

No variants found ≠ rare

"No variants found" here means AFQuery has no coverage data for the requested technology at this position. The variant exists in the database (visible with --tech wgs), but frequency cannot be estimated for wes_v1. Always check that AN is sufficient before interpreting AF.

9. Combine Filters

All filter dimensions compose with AND:

afquery query \

--db ./demo_db/ \

--locus chr1:925952 \

--sex female \

--phenotype E11.9 \

--tech wgs

Example output:

Only one sample meets all three criteria (DEMO_001: female, wgs, E11.9). With n_eligible=1, AN=2 (one diploid sample on an autosome) and AC=1 (heterozygous carrier).

10. Annotate a VCF

Use one of the demo VCFs as input:

afquery annotate \

--db ./demo_db/ \

--input examples/demo/demo_output/vcfs/DEMO_001.vcf.gz \

--output ./annotated_demo.vcf

The output VCF gains AFQUERY_AC, AFQUERY_AN, AFQUERY_AF, AFQUERY_N_HET, AFQUERY_N_HOM_ALT, AFQUERY_N_HOM_REF, and AFQUERY_N_FAIL INFO fields. Inspect the chr1 variants:

Example output (columns: CHROM, POS, ID, REF, ALT, QUAL, FILTER, INFO, FORMAT, DEMO_001):

chr1 925100 . C T 50 PASS AFQUERY_AC=4;AFQUERY_AN=20;AFQUERY_AF=0.2;AFQUERY_N_HET=4;AFQUERY_N_HOM_ALT=0;AFQUERY_N_HOM_REF=6;AFQUERY_N_FAIL=0 GT 0/1

chr1 925952 . G A 50 PASS AFQUERY_AC=6;AFQUERY_AN=20;AFQUERY_AF=0.3;AFQUERY_N_HET=4;AFQUERY_N_HOM_ALT=1;AFQUERY_N_HOM_REF=5;AFQUERY_N_FAIL=0 GT 0/1

chr1 946000 . T C 50 PASS AFQUERY_AC=2;AFQUERY_AN=20;AFQUERY_AF=0.1;AFQUERY_N_HET=2;AFQUERY_N_HOM_ALT=0;AFQUERY_N_HOM_REF=6;AFQUERY_N_FAIL=2 GT 0/1

AFQUERY_N_FAIL=2 for chr1:946000 matches the query output from Step 4. The annotated values reflect cohort-wide frequencies across all 10 samples, not just DEMO_001.

See Annotate a VCF for filtering and downstream usage.

11. Bulk Export with Dump

Export all variant frequencies to CSV:

Preview the first rows:

Example output:

chrom,pos,ref,alt,AC,AN,AF,N_HET,N_HOM_ALT,N_HOM_REF,N_FAIL

chr1,925100,C,T,4,20,0.2,4,0,6,0

chr1,925952,G,A,6,20,0.3,4,1,5,0

chr1,946000,T,C,2,20,0.1,2,0,6,2

chr1,1225000,A,G,5,20,0.25,5,0,5,0

chr1,1399914,G,T,2,8,0.25,2,0,2,0

Note that chr1:1399914 has AN=8 even in the full-cohort dump — technology-aware AN is applied automatically (WES samples are excluded for positions outside their capture regions).

Disaggregate by sex and technology:

This adds columns following the pattern AC_{sex}_{tech}, AN_{sex}_{tech}, AF_{sex}_{tech} (and genotype counts) for each sex-technology combination. The stratified dump is useful for case-control comparisons and population-specific frequency analysis.

12. Interpret Results with ACMG Criteria

With the annotated VCF or query results, you can apply ACMG criteria:

- BA1: Is AF > 5% in your cohort? → Variant is benign.

- PM2_Supporting: Is the variant absent (AC=0) with high AN? → Supporting pathogenic evidence.

- PS4: Is the variant enriched in cases vs. controls? Use

--phenotypeand--phenotype ^disease_codeto compare.

For detailed guidance, see ACMG Criteria (BA1/PM2/PS4).

Next Steps

- Key Concepts — understand how bitmaps, Parquet, and metadata filtering work

- Understanding Output — field definitions and interpretation of N_FAIL, AN=0, and other special cases

- Manifest Format — prepare your own cohort manifest

- Create a Database — build a database from your real data

- ACMG Criteria — apply local AF to variant classification

Key Concepts

Mental Model

AFQuery = bitmap index over genotypes.

For each variant, AFQuery stores a compressed bitset recording which samples carry the alt allele. For each query, it intersects the carrier bitset with an eligible-sample bitset, counts bits, and returns AC/AN/AF.

Samples → Metadata → Filters → Eligible Bitmap

Variants → Genotypes → het/hom Bitmaps

Query: (eligible bitmap) AND (carrier bitmap) → AC; count(eligible) → AN; AC/AN → AF

flowchart LR

subgraph Input

S["Samples + Metadata"]

V["Variants + Genotypes"]

end

subgraph Bitmaps

E["Eligible Bitmap<br/>(sex, phenotype, tech filters)"]

C["Carrier Bitmap<br/>(het | hom per variant)"]

end

subgraph Result

R["AC = popcount(eligible AND carrier)<br/>AN = popcount(eligible) × ploidy<br/>AF = AC / AN"]

end

S --> E

V --> C

E --> R

C --> R

style E fill:#e3f2fd

style C fill:#f3e5f5

style R fill:#e8f5e9Why Cohort-Specific AF Matters

Allele frequency is not a fixed property of a variant — it is a property of a population. Frequencies vary substantially across ancestry, sequencing technology, and clinical composition. Global databases like gnomAD are invaluable but may not reflect your cohort's background, leading to misestimated AF and incorrect variant classification.

AFQuery lets you compute allele frequencies on exactly the samples in your hands — and on any dynamically defined subset of them — without rebuilding the database.

For a detailed discussion of the methodological gaps AFQuery addresses, including real-world reclassification examples and peer-reviewed references, see Why Local Allele Frequencies Matter.

Allele Frequency (AC / AN / AF)

For a given variant (chromosome, position, ref, alt):

- AC (Allele Count) — number of copies of the alt allele observed across eligible samples

- AN (Allele Number) — total number of alleles examined (2× diploid samples, 1× haploid)

- AF (Allele Frequency) —

AC / AN(None when AN = 0)

A heterozygous carrier contributes AC=1; a homozygous alt carrier contributes AC=2.

Ploidy

AN depends on the chromosome and sex of eligible samples:

| Chromosome | Female | Male |

|---|---|---|

| Autosomes (chr1–22) | 2 | 2 |

| chrX (non-PAR) | 2 | 1 |

| chrX (PAR1/PAR2) | 2 | 2 |

| chrY | 0 | 1 |

| chrM | 1 | 1 |

See Ploidy & Special Chromosomes for PAR coordinates.

Worked Example

Consider a cohort of 60 samples (50 diploid autosomes) with a variant at position chr1:925952 G>A:

| Group | Eligible samples | AN | AC | AF |

|---|---|---|---|---|

| Full cohort (all 60) | 60 samples | 120 | 3 | 0.025 |

| Females only (35) | 35 samples | 70 | 2 | 0.029 |

Tagged E11.9 (20) |

20 samples | 40 | 0 | 0.000 |

Not tagged E11.9 (40) |

40 samples | 80 | 3 | 0.038 |

Tip

AN is not always 2 × cohort_size. Eligible samples change per query, so AN reflects your chosen subgroup exactly.

Visualization

The same variant can show dramatically different allele frequencies across subgroups:

graph TB

subgraph Full["Full Cohort (60 samples)"]

F["AC=3, AN=120, AF=0.025"]

end

subgraph Females["Females Only (35 samples)"]

FEM["AC=2, AN=70, AF=0.029"]

end

subgraph Disease["Tagged E11.9 (20 samples)"]

DIS["AC=0, AN=40, AF=0.000"]

end

subgraph Controls["Without E11.9 (40 samples)"]

CTL["AC=3, AN=80, AF=0.038"]

end

Full -->|filter| Females

Full -->|stratify| Disease

Full -->|exclude| Controls

style F fill:#e3f2fd

style FEM fill:#f3e5f5

style DIS fill:#ffebee

style CTL fill:#e8f5e9How AFQuery Stores Data

Per-Variant Bitmaps

For each variant row, AFQuery stores per-sample genotype information as Roaring Bitmaps. Three of them drive every query:

het_bitmap— sample is heterozygous (GT=0/1) withFILTER=PASShom_bitmap— sample is homozygous alt (GT=1/1, orGT=1on haploid regions) withFILTER=PASSfail_bitmap— the sample's call at this site hasFILTER≠PASS

Each sample has a stable integer ID (0-indexed). The bit position in the bitmap equals the sample ID. Databases built with coverage-quality filters carry two additional bitmaps — see Data Model.

Parquet Storage

Bitmaps are serialized and stored in Parquet files, partitioned by chromosome and 1-Mbp bucket:

variants/

chr1/

bucket_0.parquet ← positions 0–999,999

bucket_1.parquet ← positions 1,000,000–1,999,999

...

chr2/

...

Rows within each bucket are sorted by (pos, alt).

Capture Index (WES)

For whole-exome sequencing (WES) and gene panels technologies, a BED file defines covered regions. AFQuery builds an interval tree (pickle file) per technology so queries can determine which WES samples are eligible at any given position.

WGS samples are always eligible (no BED file needed).

The Manifest

The manifest is a TSV file that drives database creation. It maps each sample to its:

- VCF file path

- Sex (

male/female) - Sequencing technology

- Phenotype codes (arbitrary strings, comma-separated)

The manifest is parsed into metadata.sqlite during create-db. The original path is recorded in manifest.json.

Sample Filtering Model

Queries can restrict the eligible sample set along three independent dimensions:

| Dimension | Filter | Default |

|---|---|---|

| Sex | male, female, or both |

both |

| Phenotype | Include/exclude phenotype codes (arbitrary strings) | all samples |

| Technology | Include/exclude tech names | all samples |

Filters compose with AND across dimensions: a sample must satisfy all three to be eligible.

Within a dimension, multiple include codes compose with OR (a sample matching any code is included).

AN is computed only over eligible samples, so AF naturally reflects the chosen subgroup.

The Metadata Model

AFQuery treats phenotype codes as arbitrary string labels. You can use:

- ICD-10 disease codes (International Classification of Diseases, 10th revision) — e.g.,

E11.9,G40 - Human Phenotype Ontology (HPO) terms — e.g.,

HP:0001250 - OMIM entries (Online Catalog of Human Genes and Genetic Disorders) — e.g.,

OMIM:143100 - Custom project tags (

control,rare_disease,pilot_cohort) - Technology subgroups (

panel_v1,panel_v2) - Any combination of the above

Multiple labels per sample are supported. There is no validation or controlled vocabulary — you define the ontology for your cohort.

When planning phenotype codes, consider:

- Codes can be updated after ingestion using

afquery update-db --update-sample(see Updating sample metadata) - Codes are case-sensitive:

E11.9≠e11.9 - Trailing or leading spaces cause silent mismatch (always use

E11.9,I10, neverE11.9, I10)

PASS-only ingestion is always enforced. See FILTER=PASS Tracking for details.

Next Steps

- 5-Min Quickstart — build your first database and run queries

- Sample Filtering — phenotype, sex, and technology filter syntax

- Ploidy & Special Chromosomes — PAR regions and ploidy rules in detail

VCF Preprocessing

AFQuery ingests single-sample, normalized VCF files. While AFQuery itself does not perform VCF normalization, the accuracy of your allele frequency estimates depends on the quality and consistency of your input VCFs. This page explains why normalization matters and provides a reference pipeline.

A ready-to-use normalization script is provided at resources/normalize_vcf.sh.

Why Normalize?

Left-alignment and decomposition

Multi-allelic variants and complex indels can be represented in multiple equivalent ways in VCF format. Without normalization:

- The same deletion may appear as different ALT alleles depending on the caller

- Multi-allelic sites may not be decomposed into biallelic records

- Duplicate records can inflate AC

bcftools norm left-aligns indels against the reference genome and decomposes multi-allelic sites, ensuring consistent representation across samples.

Ploidy correction for sex chromosomes

Male samples should have haploid genotype calls at chrX non-PAR regions (GT=1, not GT=0/1 or 1/1). Many variant callers output diploid genotypes for males at chrX by default. Without ploidy correction:

- Males at chrX are counted as diploid → AN is inflated by up to 50%

- AF estimates for X-linked variants are systematically underestimated

bcftools +fixploidy corrects genotype ploidy based on a sex file.

Filtering and stripping

- Non-PASS genotypes: Masking them as missing ensures that low-quality calls do not inflate AC. AFQuery tracks these as N_FAIL.

- Homozygous reference calls: Removing ref/ref genotypes reduces file size and speeds ingestion; they contribute AC=0 and are not needed

- INFO fields: Stripping INFO reduces file size and speeds ingestion. Additionally, malformed or non-standard INFO fields produced by some variant callers can break downstream parsing; stripping them pre-emptively prevents these errors.

- FORMAT fields: Only

GT,DP, andGQare preserved.GTis the genotype required by AFQuery for all queries.DPandGQare read by the coverage-evidence quality flags (afquery create-db --min-dp / --min-gq / --min-qual / --min-covered). VCFs withoutDP/GQare still valid; their carriers simply contribute no quality evidence to the cohort. All other FORMAT fields (PL,AD, etc.) are dropped to reduce file size.

Next Steps

- Manifest Format — describe your cohort samples for ingestion

- Create a Database — build the AFQuery database from normalized VCFs

- 5-Min Quickstart — end-to-end tutorial from manifest to first query

Understanding Output

This page explains what each field in AFQuery output means and how to interpret special cases.

Output Fields

| Field | Type | Description |

|---|---|---|

| AC | int | Allele count — number of alt allele copies in eligible samples |

| AN | int | Allele number — total alleles examined (adjusted for ploidy and eligible samples) |

| AF | float | Allele frequency — AC / AN. None when AN=0 |

| N_HET | int | Number of eligible samples heterozygous for the alt allele (GT=0/1) |

| N_HOM_ALT | int | Number of eligible samples homozygous for the alt allele (GT=1/1 or GT=1). Includes haploid carriers on sex chromosomes and chrM. See Ploidy. |

| N_HOM_REF | int | Number of eligible samples homozygous reference (GT=0/0 or GT=0) |

| n_eligible | int | Number of eligible samples — those passing the sex/phenotype/tech filters and covered at this position |

| N_FAIL | int | Number of eligible samples whose call at this position had FILTER≠PASS. These samples are counted only in N_FAIL — not in N_HET, N_HOM_ALT, or N_HOM_REF — but they stay eligible and still count toward AN. |

| N_NO_COVERAGE | int | Number of eligible samples whose tech lacks coverage evidence at this position. Excluded from N_HOM_REF to keep AC/AN conservative. Always 0 unless a coverage-evidence filter is active. See Coverage Evidence. |

Output Formats

Text (default)

chr1:925952 G>A AC=3 AN=120 AF=0.0250 n_eligible=60 N_HET=1 N_HOM_ALT=1 N_HOM_REF=57 N_FAIL=0 N_NO_COVERAGE=0

TSV

chrom pos ref alt AC AN AF n_eligible N_HET N_HOM_ALT N_HOM_REF N_FAIL N_NO_COVERAGE

chr1 925952 G A 3 120 0.025000 60 1 1 57 0 0

JSON

{

"chrom": "chr1",

"pos": 925952,

"ref": "G",

"alt": "A",

"AC": 3,

"AN": 120,

"AF": 0.025,

"n_eligible": 60,

"N_HET": 1,

"N_HOM_ALT": 1,

"N_HOM_REF": 57,

"N_FAIL": 0,

"N_NO_COVERAGE": 0

}

Special Cases

AN=0 and AF=None

AN=0 means no eligible samples have coverage at this position. This happens when:

- All eligible samples are WES or panels and the position is outside capture regions

- The phenotype/sex/technology filter excludes all samples

- The chromosome is not in the database

AN=0 does not mean the variant is absent

AN=0 means AFQuery has no data to compute frequency. It is not evidence of rarity.

Warnings

afquery emits a AfqueryWarning to stderr when a query may silently return fewer or no results. Common causes:

| Situation | Warning message |

|---|---|

| Chromosome not in database | Chromosome 'chrXX' has no data in this database. Available: [...] |

| Unknown phenotype code (include) | Phenotype 'CODE' not in database — include will match 0 samples. |

| Unknown phenotype code (exclude) | Phenotype 'CODE' not in database — exclude has no effect. |

| Unknown technology name (include) | Technology 'NAME' not in database — include will match 0 samples. |

| Unknown technology name (exclude) | Technology 'NAME' not in database — exclude has no effect. |

| Contradictory filters (e.g. include + exclude same code) | Sample filter produces an empty eligible set — all queries will return AN=0. |

Use --no-warn to suppress these warnings:

afquery query --db ./my_db/ --locus chr22:1000 --no-warn

afquery annotate --db ./my_db/ --input in.vcf --output out.vcf --no-warn

AC=0 with High AN

AC=0 with a high AN (e.g., AN=4000) means the variant was genuinely not observed in a well-covered cohort. This is meaningful evidence that the variant is rare or absent in your population.

N_FAIL > 0

When N_FAIL > 0, some eligible samples had a non-PASS filter at this position in their source VCF. A high N_FAIL relative to AN may indicate a problematic site (e.g., systematic sequencing artifacts). Consider filtering positions with more than 10% of failing (N_FAIL / (AN / 2) > 0.1).

VCF Annotation Fields

When using afquery annotate, the following INFO fields are added to each variant:

| INFO field | Number | Description |

|---|---|---|

AFQUERY_AC |

A (per ALT) | Allele count — one value per ALT allele |

AFQUERY_AN |

1 (per site) | Allele number — shared across all ALT alleles |

AFQUERY_AF |

A (per ALT) | Allele frequency — one value per ALT allele |

AFQUERY_N_HET |

A (per ALT) | Heterozygous sample count per ALT allele |

AFQUERY_N_HOM_ALT |

A (per ALT) | Homozygous alt sample count per ALT allele |

AFQUERY_N_HOM_REF |

A (per ALT) | Homozygous ref sample count per ALT allele |

AFQUERY_N_FAIL |

1 (per site) | Fail sample count — shared across all ALT alleles |

AFQUERY_N_NO_COVERAGE |

A (per ALT) | Eligible samples whose tech lacks coverage evidence at this position. Always 0 unless a coverage-evidence filter is active. See Coverage Evidence. |

Multi-allelic sites

Number=A fields have one value per ALT allele (comma-separated for multi-allelic sites). Number=1 fields are shared across all ALT alleles at the same position.

These fields can be used directly in downstream filtering with bcftools filter:

# Keep variants rare in cohort with sufficient coverage

bcftools filter -i 'AFQUERY_AF < 0.001 && AFQUERY_AN >= 1000' annotated.vcf.gz

Next Steps

- Key Concepts — how AC, AN, and AF are computed

- Sample Filtering Guide — phenotype, sex, and technology filters

- Annotate a VCF — add AFQUERY_AF/AC/AN fields to a patient VCF

- Clinical ACMG Use Cases — applying local AF to ACMG BS1/PM2 criteria

User Guides

🧬 Bioinformatics Workflows

Create a Database

afquery create-db builds the AFQuery database from a manifest of single-sample VCFs. This is a one-time setup step; incremental updates use afquery update-db.

Basic Usage

For cohorts with WES/panel samples, provide the BED file directory:

afquery create-db \

--manifest manifest.tsv \

--output-dir ./db/ \

--genome-build GRCh38 \

--bed-dir ./beds/

What Happens

- Ingest phase — Each VCF is parsed with cyvcf2. Genotypes and quality fields are written to a temporary per-sample Parquet file, one row per variant per sample.

- Build phase — DuckDB reads the temporary Parquet files, aggregates per 1-Mbp bucket, and writes Roaring Bitmap Parquet files partitioned by chromosome and bucket.

- Finalize —

manifest.jsonandmetadata.sqliteare written to the output directory.

Directory Layout After Creation

./db/

├── manifest.json # Build configuration (genome build, schema version, etc.)

├── metadata.sqlite # Sample/phenotype/technology/changelog metadata

├── variants/ # Parquet files partitioned by chromosome and bucket

│ ├── chr1/

│ │ ├── bucket_0.parquet # Positions 0–999,999

│ │ ├── bucket_1.parquet # Positions 1,000,000–1,999,999

│ │ └── ...

│ ├── chr2/

│ └── ...

└── capture/ # Interval trees for WES technologies (pickle files)

├── wes_v1.pkl

└── wes_v2.pkl

Memory and Thread Tuning

For large cohorts, tune these options:

afquery create-db \

--manifest manifest.tsv \

--output-dir ./db/ \

--genome-build GRCh38 \

--build-threads 32 \

--build-memory 4GB

| Option | Default | Recommendation |

|---|---|---|

--build-threads |

all CPUs | Set to min(cpu_count, available_RAM_GB / 2) |

--build-memory |

2GB |

Increase for dense WGS regions or large cohorts |

--threads |

all CPUs | Controls ingest parallelism (VCF parsing) |

The build phase uses one DuckDB process per 1-Mbp bucket. With --build-threads 32 and --build-memory 4GB, peak RAM usage is approximately 32 × 4 = 128 GB.

Resume Behavior

If create-db is interrupted, it resumes automatically from where it left off. Individual bucket Parquet files that were already written are skipped.

To force a complete restart:

Warning

--force deletes all existing output in --output-dir. Use with caution.

FILTER=PASS Behavior

Only FILTER=PASS calls (or calls with no FILTER field) contribute to AC, and therefore to AF. AN is not affected — it counts every eligible sample, failed calls included. Calls that fail a filter are tracked in fail_bitmap and surfaced as N_FAIL. PASS-only counting is always enforced — there is currently no CLI option to change this behaviour.

See FILTER=PASS Tracking for details.

Coverage-Evidence Filters

Four optional flags enable per-sample, quality-aware tracking of which positions each partially-covered technology (WES, panels) actually covered. They are fully opt-in.

| Flag | Default | Effect |

|---|---|---|

--min-dp D |

0 | Minimum FORMAT/DP for a carrier to count as quality evidence. |

--min-gq G |

0 | Minimum FORMAT/GQ for a carrier to count as quality evidence. |

--min-qual Q |

0.0 | Minimum VCF QUAL field for a carrier to count as quality evidence. |

--min-covered K |

0 | Per partially-covered tech, the position is "trusted" only if at least K of its carriers pass the quality thresholds. Non-carriers of failing positions are recorded as N_NO_COVERAGE. |

When any of these flags is non-zero AFQuery reads FORMAT/DP, FORMAT/GQ,

and QUAL from each variant call during ingest. Use the bundled

resources/normalize_vcf.sh (which preserves these FORMAT fields) or ensure

your own preprocessing keeps them.

Example:

afquery create-db \

--manifest samples.tsv \

--output-dir ./db/ \

--genome-build GRCh38 \

--bed-dir ./beds/ \

--min-dp 30 --min-gq 20 --min-covered 1

Thresholds are fixed at creation time. update-db --add-samples reuses them

and re-applies them to every position whose partially-covered tech receives

new samples (see Update Database).

See Coverage Evidence for when to reach

for each flag, how N_NO_COVERAGE is computed, and the query-time companion

flag --min-quality-evidence.

Validating the Result

After creation, run:

And inspect database metadata:

Example info output:

Database: ./db/

Schema version: 2.0

Genome build: GRCh38

DB version: 1.0

Samples: 1371

Technologies: wgs, wes_v1, wes_v2

Chromosomes: chr1 ... chrX chrY chrM

These commands are available at any time after database creation — not only immediately after create-db. Use afquery check to verify database integrity (manifest consistency, Parquet file health, capture index presence) and afquery info to inspect sample counts, registered technologies, and phenotype codes.

Full Option Reference

See CLI Reference → create-db.

Next Steps

- Manifest Format — TSV manifest column reference and common mistakes

- Query Allele Frequencies — run your first queries against the new database

- Performance Tuning — build thread and memory configuration for large cohorts

Manifest Format

The manifest is a tab-separated (TSV) file that describes your sample cohort. It is the primary input to afquery create-db and afquery update-db --add-samples.

Column Specification

| Column | Required | Type | Description |

|---|---|---|---|

sample_name |

Yes | string | Unique identifier for the sample. Must be unique across the entire database. |

vcf_path |

Yes | string | Absolute or relative path to the single-sample VCF file (plain or .gz). |

sex |

Yes | male | female |

Biological sex. Used for ploidy-aware AN computation on sex chromosomes. |

tech_name |

Yes | string | Sequencing technology name (e.g., wgs, wes_v1, capture_kit_A). Case-sensitive. |

phenotype_codes |

No | string | Comma-separated phenotype codes for this sample (arbitrary strings, e.g., E11.9,control). |

Example

sample_name vcf_path sex tech_name phenotype_codes

SAMP_001 /data/vcfs/SAMP_001.vcf.gz female wgs E11.9,I10

SAMP_002 /data/vcfs/SAMP_002.vcf.gz male wgs E11.9

SAMP_003 /data/vcfs/SAMP_003.vcf.gz female wes_v1 I10

SAMP_004 /data/vcfs/SAMP_004.vcf.gz male wes_v1

SAMP_005 /data/vcfs/SAMP_005.vcf.gz female wgs E11.9,I10,N18.3

Header row required

The first row must be the header with exactly these column names.

Phenotype Codes

Naming map

The manifest column is phenotype_codes (plural, comma-separated). The CLI flag is --phenotype (singular, repeatable). In documentation text, "phenotype codes" refers to the values stored in this field.

The phenotype_codes column accepts any arbitrary strings — there is no required ontology. Common choices include:

- ICD-10 disease codes (e.g.,

E11.9,I10,N18.3) - HPO phenotype terms (e.g.,

HP:0001250) - Custom project tags (e.g.,

control,pilot,high_coverage_wgs) - Population or batch labels (e.g.,

EUR,batch_2023) - Technology subgroups (e.g.,

panel_v1,panel_v2)

Multiple codes per sample can be provided (comma-separated, no spaces)

Samples with no phenotype can leave the phenotype_codes column empty

Important

phenotype_codes are stored as-is and matched exactly in queries (case-sensitive)

Technology Names

- Technology names are arbitrary strings — use whatever is meaningful for your cohort

- Common conventions:

wgs,wes_v1,wes_v2,capture_twist_v2

WGS is case-insensitive

The technology name WGS is matched case-insensitively: wgs, WGS, Wgs are all recognized as whole-genome sequencing (no BED file required). All other technology names are case-sensitive and must match exactly between the manifest and the --tech query filter.

- WGS technologies (no BED file): all positions are always eligible

- WES technologies (with BED file): coverage is determined by

<tech>.bedin--bed-dir

WES BED files

For any technology that is not WGS, you must provide a BED file named <tech>.bed in

the --bed-dir directory. If the file is not found, AFQuery aborts with an error before

any ingestion begins:

To resolve this, either supply the correct BED file or rename the technology to WGS

in the manifest (no BED file required for WGS).

BED File Format

BED files for WES capture regions must be:

- 0-based, half-open coordinates (standard BED format)

- Tab-separated with at least 3 columns:

chrom,start,end - Chromosome names matching your VCF style (

chr1or1) - Named

<tech_name>.bed(exact match to thetech_namecolumn in the manifest)

Common Mistakes

| Mistake | Effect | Fix |

|---|---|---|

| Spaces instead of tabs | Parse error | Use \t (Tab key) as separator |

Duplicate sample_name |

Ingest error | Each sample must have a unique name |

| Wrong sex value | Silent error (no ploidy adjustment) | Use exactly male or female |

Spaces in phenotype (E11.9, I10) |

Code " I10" stored with leading space |

Use E11.9,I10 (no spaces) |

| Relative VCF path from wrong CWD | File not found error | Use absolute paths or run from the correct directory |

Next Steps

- Create a Database — use the manifest to build the AFQuery database

- Key Concepts — phenotype code model and metadata filtering

- Sample Filtering — how phenotype codes translate to query filters

Update a Database

afquery update-db adds new samples, removes existing samples, updates sample metadata, and compacts the database to reclaim space.

Update Timeline

graph TD

A["Initial Build<br/>1000 samples<br/>DB v1.0"]

B["Add Batch 2<br/>+500 samples<br/>DB v1.1"]

C["Add Batch 3<br/>+300 samples<br/>DB v1.2"]

D["Remove<br/>50 samples<br/>DB v1.3"]

E["Compact<br/>reclaim 2% disk<br/>DB v1.3c"]

A -->|--add-samples batch2.tsv| B

B -->|--add-samples batch3.tsv| C

C -->|--remove-samples SAMP_*| D

D -->|--compact| E

style A fill:#e3f2fd

style B fill:#fff3e0

style C fill:#fff3e0

style D fill:#ffebee

style E fill:#e8f5e9Add Samples

Provide a new manifest TSV with the samples to add:

The new manifest follows the same format as the original (see Manifest Format). New samples are assigned monotonically increasing sample IDs.

To add multiple manifests at once:

For WES samples, provide the BED file directory:

Coverage-evidence handling

If the database was created with --min-dp / --min-gq / --min-qual /

--min-covered, the existing thresholds are read from the database and

re-applied to all samples — old and new. There is no update-db flag to

override them; thresholds are fixed at creation time so that quality

decisions are comparable across batches.

When new carriers push a partially-covered tech above the --min-covered

threshold at positions that were previously below it, those positions are

re-evaluated and their non-carrier samples once again count as N_HOM_REF

instead of N_NO_COVERAGE. The recomputation runs only for chromosomes

touched by the new samples; existing rows on other chromosomes are not

rewritten.

VCFs added via update-db should preserve FORMAT/DP and FORMAT/GQ (the

bundled resources/normalize_vcf.sh does so by default). Samples without

those fields are still merged correctly but contribute no quality evidence.

See Coverage Evidence for the full flag reference and when to use each one.

Remove Samples

Remove one or more samples by name:

Remove multiple samples:

Or repeat the flag:

Note

Removal marks the sample as inactive and clears its bit from all bitmaps. The physical bit position is not reused. Run --compact after removing many samples to reclaim disk space.

Compact

After removing samples, compact the database to remove dead bits and reduce disk usage:

This rewrites all Parquet files, removing bits for deleted samples. For large databases, compact runs in parallel and may take several minutes.

When to Compact

- After removing more than 5–10% of samples

- When disk space is a concern

- Before archiving or sharing the database

Combine Operations

Operations can be combined in a single command:

afquery update-db \

--db ./db/ \

--remove-samples SAMP_OLD_001 \

--add-samples new_cohort.tsv \

--compact

Operations execute in this order: remove → add → compact.

Database Version

By default, the version label auto-increments (e.g., 1.0 → 2.0). Set a custom version:

View Changelog

Every update operation is logged. View the history:

Example output:

v1.0 2026-01-15 create 1371 samples added

v2.0 2026-02-01 add 42 samples added

v2.0 2026-02-15 remove 3 samples removed

v3.0 2026-03-01 compact compacted after removal

Update Sample Metadata

Correct a sample's sex or phenotype_codes without re-ingesting its VCF. Precomputed bitmaps are regenerated and the change is logged in the changelog.

Single sample

# Change sex

afquery update-db --db ./db/ --update-sample SAMP_001 --set-sex female

# Replace phenotype codes (replaces ALL current codes)

afquery update-db --db ./db/ --update-sample SAMP_001 --set-phenotype "E11.9,I10"

# Change both fields in one command

afquery update-db --db ./db/ \

--update-sample SAMP_001 \

--set-sex female \

--set-phenotype "E11.9,I10"

Batch update from TSV

Create a TSV file with a sample_name, field, new_value header. One change per row; the same sample can appear on multiple rows:

sample_name field new_value

SAMP_001 sex female

SAMP_002 phenotype_codes E11.9,I10

SAMP_003 sex male

SAMP_003 phenotype_codes C50

Operator note

Attach a free-text note to every changelog entry created by the update:

afquery update-db --db ./db/ \

--update-sample SAMP_001 \

--set-phenotype "E11.9" \

--operator-note "Corrected after clinical review 2026-03-19"

Verify the change

# Inspect the changelog

afquery info --db ./db/ --changelog

# List samples to confirm new values

afquery info --db ./db/ --samples

# Query with the updated phenotype

afquery query --db ./db/ --locus chr1:925952 --phenotype E11.9

Full Option Reference

See CLI Reference → update-db.

Next Steps

- Create a Database — initial database creation from a manifest

- Performance Tuning — thread and memory configuration for the build phase

- Multi-cohort Strategies — organizing and versioning databases across cohorts

Query Allele Frequencies

afquery query retrieves allele frequencies from the database. Three query modes are available: point, region, and batch.

Point Query

Query a single genomic position:

Filter to a specific alt allele (useful at multi-allelic sites):

Region Query

Query all variants in a genomic range:

The range is 1-based, inclusive on both ends.

Python API — multi-chromosome regions

To query variants across multiple regions (including different chromosomes)

in a single call, use query_region_multi:

from afquery import Database

db = Database("./db/")

regions = [

("chr1", 900000, 1000000),

("chr17", 41196311, 41277500),

]

results = db.query_region_multi(regions, phenotype=["E11.9"])

Results are returned in genomic order (chr1, chr2, …, chr22, chrX, chrY,

chrM). Overlapping regions are automatically deduplicated — each variant

appears at most once. Chromosome names are normalized, so "1" and "chr1"

are equivalent.

For querying specific variants across chromosomes, use query_batch_multi:

variants = [

("chr1", 925952, "G", "A"),

("chrX", 5000000, "A", "G"),

]

results = db.query_batch_multi(variants)

Results are returned in input order (by original index). Duplicate entries

are deduplicated per chromosome — if the same (chrom, pos, ref, alt) appears

more than once, only the first occurrence is included. Chromosome names are

normalized, so "1" and "chr1" are equivalent.

Batch Query

Query multiple positions at once from a file:

The input file is a headerless TSV with columns chrom pos [ref [alt]] (ref and alt are optional):

Batch queries support variants across multiple chromosomes in a single file.

Output Formats

text (default)

Human-readable, one block per variant:

chr1:925952 G>A AC=142 AN=2742 AF=0.0518 n_eligible=1371 N_HET=138 N_HOM_ALT=2 N_HOM_REF=1231 N_FAIL=0 N_NO_COVERAGE=0

tsv

Tab-separated, one row per variant, suitable for downstream processing:

chrom pos ref alt AC AN AF n_eligible N_HET N_HOM_ALT N_HOM_REF N_FAIL N_NO_COVERAGE

chr1 925952 G A 142 2742 0.051782 1371 138 2 1231 0 0

json

JSON array, one object per variant:

[

{

"chrom": "chr1",

"pos": 925952,

"ref": "G",

"alt": "A",

"AC": 142,

"AN": 2742,

"AF": 0.05178,

"n_eligible": 1371,

"N_HET": 138,

"N_HOM_ALT": 2,

"N_HOM_REF": 1231,

"N_FAIL": 0,

"N_NO_COVERAGE": 0

}

]

Coverage-Evidence Filters (no_coverage)

By default AFQuery counts every BED-covered sample without a variant call as

hom-ref. With standard variant-only VCFs that assumption can be wrong: a missing

position may simply mean the sample was not sequenced deeply enough at that locus.

Three optional flags let you trade hom-ref aggressiveness for confidence. Samples

that fall below a threshold are reported in N_NO_COVERAGE instead of N_HOM_REF

(they remain in eligible and AN, like N_FAIL).

| Flag | Meaning |

|---|---|

--min-pass K |

A partially-covered tech is valid for hom-ref at a position only if it has ≥K PASS carriers (het|hom). Otherwise its non-carrier samples move to N_NO_COVERAGE. |

--min-observed K |

Same as --min-pass, but counts any VCF entry (het\|hom\|fail). Useful when you want to include calls that failed FILTER as evidence the position was sequenced. |

--min-quality-evidence K |

Requires ≥K quality-passing carriers per partially-covered tech. Requires a database built with --min-dp, --min-gq, --min-qual, or --min-covered. |

--min-pass and --min-observed combine with AND (both must hold). Both

default to 0, which disables the gate.

afquery query --db ./db/ --locus chr1:925952 --min-pass 1

afquery query --db ./db/ --region chr1:900000-1000000 --min-observed 2 --min-pass 1

The genotype invariant becomes:

N_HET + N_HOM_ALT + N_HOM_REF + N_FAIL + N_NO_COVERAGE = n_eligible.

Fully-covered samples (those whose tech was registered without a BED) are

never affected. Carrier samples (het/hom/fail) are never moved to

N_NO_COVERAGE. See Coverage Evidence

for when to reach for each flag.

Sample Filtering

All query modes support the same filter options:

Filters compose with AND:

--phenotype E11.9 --sex female= female samples with E11.9

Multiple values for the same filter compose with OR:

--phenotype E11.9 --phenotype I10= samples with E11.9 OR I10

Exclude with ^ prefix:

--tech ^wes_v1= all technologies exceptwes_v1

See Sample Filtering for full syntax.

Results When AN=0

If all samples are excluded by your filters, the result will have AC=0, AN=0, and AF=None. This is expected behavior — it means no samples in the selected subgroup were eligible at that position (e.g., all WES samples and the position is not in their capture regions).

Comparing AF Across Subgroups

Run two queries and compare:

# Diabetic patients

afquery query --db ./db/ --locus chr1:925952 --phenotype E11.9 --format json

# Healthy controls (exclude diabetic)

afquery query --db ./db/ --locus chr1:925952 --phenotype ^E11.9 --format json

For systematic comparison across many variants, consider Bulk Export with --by-phenotype, or see Cohort Stratification for a worked multi-group comparison.

Full Option Reference

Next Steps

- Sample Filtering — full filter syntax for phenotype, sex, and technology

- Understanding Output — field definitions and special cases (AN=0, N_FAIL)

- Cohort Stratification — comparing AF across multiple groups systematically

Annotate a VCF

afquery annotate adds allele frequency information to an existing VCF file as INFO fields. It queries each variant in the VCF against the database and writes results inline.

Basic Usage

Added INFO Fields

| Field | Type | Number | Description |

|---|---|---|---|

AFQUERY_AC |

Integer | A (per ALT) | Allele count in eligible samples |

AFQUERY_AN |

Integer | 1 (per site) | Allele number (total alleles examined) |

AFQUERY_AF |

Float | A (per ALT) | Allele frequency (AC / AN) |

AFQUERY_N_HET |

Integer | A (per ALT) | Heterozygous sample count |

AFQUERY_N_HOM_ALT |

Integer | A (per ALT) | Homozygous alt sample count |

AFQUERY_N_HOM_REF |

Integer | A (per ALT) | Homozygous ref sample count |

AFQUERY_N_FAIL |

Integer | 1 (per site) | Eligible samples whose call had FILTER≠PASS. Excluded from AC, but still counted in AN. Mutually exclusive with N_HET/N_HOM_ALT/N_HOM_REF. |

AFQUERY_N_NO_COVERAGE |

Integer | A (per ALT) | Eligible samples whose tech lacks coverage evidence at this position. Excluded from N_HOM_REF to keep AC/AN conservative. Always 0 unless a coverage-evidence filter is active. See Coverage Evidence. |

Multi-allelic sites

Number=A fields have one value per ALT allele (comma-separated for multi-allelic sites). Number=1 fields are shared across all ALT alleles at the same position.

Sample Filtering

Annotate with a specific subgroup:

afquery annotate \

--db ./db/ \

--input variants.vcf \

--output annotated.vcf \

--phenotype E11.9 \

--sex female \

--tech wgs

The INFO fields will reflect AF computed only over the filtered sample set. This allows generating population-specific frequency tracks.

Parallelism

Annotation runs in parallel across variants. By default, all available CPU cores are used:

For large VCFs (100K+ variants), set --threads to the number of available cores.

Using Annotated Output

BCFtools

Filter variants with high AF:

Extract specific fields:

Python (pysam / cyvcf2)

import cyvcf2

vcf = cyvcf2.VCF("annotated.vcf")

for variant in vcf:

ac = variant.INFO.get("AFQUERY_AC")

an = variant.INFO.get("AFQUERY_AN")

af = variant.INFO.get("AFQUERY_AF")

print(f"{variant.CHROM}:{variant.POS} AC={ac} AN={an} AF={af}")

R (VariantAnnotation)

Full Option Reference

Next Steps

- Clinical Prioritization — filter annotated VCFs to retain only rare local variants

- Sample Filtering — annotate using subgroup-specific AF (phenotype, sex, tech)

- Understanding Output — what AFQUERY_AF/AC/AN/N_FAIL fields mean

Bulk Export (dump)

afquery dump exports allele frequency data to CSV. It supports filtering by region and disaggregating output by sex, technology, or phenotype group.

Basic Usage

Export all variants to stdout:

Write to a file:

AC > 0 filter

By default dump exports only variants with AC > 0. Variants at covered positions with no carriers are omitted. Use --all-variants to include AC=0 rows, or afquery query --locus to verify coverage at a specific position.

Filter by Region

Export a single chromosome:

Export a specific region:

Positions are 1-based, inclusive on both ends.

Sample Filtering

Apply the same sample filters as query:

afquery dump --db ./db/ \

--phenotype E11.9 \

--sex female \

--tech wgs \

--output diabetic_female_wgs.csv

Disaggregate Output

All three disaggregation modes work on the same principle: add stratified columns alongside the totals. They can be combined in a single command.

Base columns (always present):

N_NO_COVERAGE is always emitted but is 0 unless a coverage-evidence

filter is active (see Coverage Evidence).

Add separate columns for male and female:

Output columns:

Add separate columns per sequencing technology:

Output columns include AC_wgs, AN_wgs, AF_wgs, N_HET_wgs, N_HOM_ALT_wgs, N_HOM_REF_wgs, N_FAIL_wgs, N_NO_COVERAGE_wgs, AC_wes_v1, AN_wes_v1, etc. (one group of eight columns per registered technology).

Add separate columns for specific phenotype groups:

Output includes AC_E11.9, AN_E11.9, AF_E11.9, N_HET_E11.9, N_HOM_ALT_E11.9, N_HOM_REF_E11.9, N_FAIL_E11.9, N_NO_COVERAGE_E11.9, AC_I10, etc.

Parallelism

Use multiple threads for faster export:

Full Option Reference

See CLI Reference → dump.

Next Steps

- Cohort Stratification — systematic cross-group AF comparison on specific loci

- Sample Filtering — filter syntax shared across query, annotate, and dump

- Performance Tuning — thread tuning for large export jobs

Variant Info

afquery variant-info returns the list of samples carrying a specific variant, together with each sample's metadata: sex, sequencing technology, phenotype codes, genotype (het/hom), and FILTER status (PASS/FAIL).

This avoids the need to re-query raw VCF files when inspecting individual variant carriers.

Basic usage

Tip

variant-info is the natural next step after query — once you find a variant of interest, use it to see which specific samples carry it.

By default all samples are queried and results are printed as an aligned text table:

sample_id sample_name sex tech phenotypes genotype filter

--------- ----------- ------ --------- ------------ -------- ------

3 P003 male WGS E11.9,J45 het PASS

17 P017 female WES_kit_A E11.9 hom PASS

42 P042 male WGS I10 alt FAIL

Filtering to a specific allele

When multiple alleles exist at the same position, use --ref and --alt to restrict to one:

Note

Without --ref/--alt, carriers for all alleles at the locus are returned and a warning is emitted if more than one allele is found. Specify both flags to disambiguate at multi-allelic sites.

Sample filters

variant-info accepts the same sample filters as query:

# Only female carriers

afquery variant-info --db ./db/ --locus chr1:925952 --sex female

# Only carriers with phenotype E11.9

afquery variant-info --db ./db/ --locus chr1:925952 --phenotype E11.9

# Exclude phenotype I10

afquery variant-info --db ./db/ --locus chr1:925952 --phenotype ^I10

# Restrict to WGS samples

afquery variant-info --db ./db/ --locus chr1:925952 --tech WGS

# Combine filters

afquery variant-info --db ./db/ --locus chr1:925952 \

--sex female --phenotype E11.9 --tech WGS,WES_kit_A

See Sample Filtering for the full filter syntax.

Output formats

TSV

Machine-readable tab-separated output, suitable for downstream processing:

sample_id sample_name sex tech phenotypes genotype filter

3 P003 male WGS E11.9,J45 het PASS

17 P017 female WES_kit_A E11.9 hom PASS

42 P042 male WGS I10 alt FAIL

JSON

Structured output with variant metadata and a sample list:

{

"variant": {

"chrom": "chr1",

"pos": 925952,

"ref": ".",

"alt": "."

},

"samples": [

{

"sample_id": 3,

"sample_name": "P003",

"sex": "male",

"tech": "WGS",

"phenotypes": ["E11.9", "J45"],

"genotype": "het",

"filter": "PASS"

},

{

"sample_id": 42,

"sample_name": "P042",

"sex": "male",

"tech": "WGS",

"phenotypes": ["I10"],

"genotype": "alt",

"filter": "FAIL"

}

]

}

When --ref and --alt are specified, the variant block contains the actual alleles. Otherwise, "." is used as a placeholder.

Genotype values

| Value | Meaning |

|---|---|

het |

Heterozygous carrier, FILTER=PASS |

hom |

Homozygous alt carrier, FILTER=PASS |

alt |

Non-ref carrier with FILTER≠PASS (ploidy unknown) |

no_coverage |

Sample's tech lacks coverage evidence at this position; not a carrier. Only appears when a coverage-evidence filter (--min-pass, --min-observed, --min-quality-evidence, or build-time --min-covered) is active. The FILTER column is empty (text/tsv) or null (JSON) — PASS/FAIL does not apply because there is no call. See Coverage Evidence. |

All options

| Option | Default | Description |

|---|---|---|

--db |

required | Path to database directory |

--locus |

required | CHROM:POS (e.g. chr1:925952) |

--ref |

— | Filter to specific reference allele |

--alt |

— | Filter to specific alternate allele |

--phenotype |

all | Include phenotype (repeatable; ^CODE excludes) |

--sex |

both |

male, female, or both |

--tech |

all | Include technology (repeatable; ^NAME excludes) |

--format |

text |

text, tsv, or json |

--no-warn |

off | Suppress AfqueryWarning messages |

See also CLI Reference → variant-info.

Next Steps

- Sample Filtering — full filter syntax for phenotype, sex, and technology

- Understanding Output — field definitions and special cases

- FILTER=PASS Tracking — understanding FAIL genotypes

- Python API → variant_info — programmatic access

- ACMG Criteria — using carrier info for variant classification

Sample Filtering

AFQuery supports flexible metadata-based selection of samples for AF computation. Filters are available on all query commands (query, annotate, dump, variant-info).

Phenotype codes are arbitrary string labels — you define them in your manifest. They can be ICD-10 codes, HPO terms, project tags (control, pilot), or any strings meaningful to your cohort. The filtering system does not interpret the codes — it matches them exactly as stored.

Filter Dimensions

Three independent filter dimensions are supported:

| Dimension | Flag | Default |

|---|---|---|

| Sex | --sex |

both |

| Phenotype | --phenotype |

all samples |

| Technology | --tech |

all samples |

Filters compose with AND across dimensions: a sample must satisfy all active filters to be eligible.

Filtering Flow

graph TD

A["Sample Pool<br/>300 samples total"]

B["Sex Filter<br/>female"]

C["Phenotype Filter<br/>E11.9 OR I10"]

D["Tech Filter<br/>wgs"]

E["Eligible Set<br/>42 samples"]

A -->|--sex female| B

B -->|200 samples| C

C -->|--phenotype E11.9,I10| D

D -->|--tech wgs| E

style A fill:#e3f2fd

style B fill:#f3e5f5

style C fill:#fff3e0

style D fill:#e8f5e9

style E fill:#fce4ecSex Filter

--sex female # only female samples

--sex male # only male samples

--sex both # all samples (default)

The sex filter affects AN computation on sex chromosomes (chrX/chrY/chrM). See Ploidy & Sex Chromosomes.

Phenotype Filter

Include a Single Code

Include Multiple Codes (OR logic)

Repeat the flag or use comma-separated values:

--phenotype E11.9 --phenotype I10 # samples with E11.9 OR I10

--phenotype E11.9,I10 # equivalent shorthand

Exclude Codes

Prefix with ^:

Combine Include and Exclude

No Phenotype Filter (default)

Omitting --phenotype includes all samples regardless of phenotype.

Pseudo-control Queries

A common pattern is computing AF over all samples except a specific group, effectively using the rest of the cohort as controls:

# All samples except those tagged with cardiomyopathy ICD code

afquery query --db ./db/ --locus chr1:925952 --phenotype ^I42

# All samples except those with a specific project tag

afquery query --db ./db/ --locus chr1:925952 --phenotype ^pilot_cohort

See Use Cases: Pseudo-controls for a full worked example.

Technology Filter

Naming conventions

In the documentation, "WGS" (uppercase) refers to whole-genome sequencing as a concept. In CLI examples, wgs (lowercase) is used as the manifest technology name. Both work — the WGS check is case-insensitive. All other technology names are case-sensitive.

Include a Technology

Include Multiple Technologies (OR logic)

Exclude a Technology

Composing Filters

Filters across dimensions require a sample to satisfy all conditions:

This selects samples that are:

- Female AND

- Have phenotype code E11.9 AND

- Use WGS technology

Effect on AN

AN is computed only over eligible samples. When filters are applied:

- Samples excluded by phenotype/sex/tech do not contribute to AN

- WES samples at positions outside their capture regions do not contribute to AN

- A result with

AN=0means no eligible samples at that position

Edge Cases

| Scenario | Result |

|---|---|

| No samples match include filter | AC=0, AN=0, AF=None |

| Include and exclude same code | AC=0, AN=0, AF=None (empty intersection) |

| WES-only tech at WGS-only position | AC=0, AN=0, AF=None |

| All samples excluded | AC=0, AN=0, AF=None |

Using Filters in the Python API

from afquery import Database

db = Database("./db/")

# Female WGS samples with the E11.9 phenotype code

results = db.query(

chrom="chr1",

pos=925952,

phenotype=["E11.9"],

sex="female",

tech=["wgs"],

)

# Exclude wes_v1

results = db.query(

chrom="chr1",

pos=925952,

tech=["^wes_v1"],

)

The ^ prefix exclusion syntax works the same in the Python API as in the CLI.

Next Steps

- Query Allele Frequencies — apply filters in point, region, and batch queries

- Cohort Stratification — systematic multi-group AF comparison

- Pseudo-controls — exclusion-based background frequency for case enrichment

🏥 Clinical Workflows

ACMG Criteria: BA1, PM2, and PS4

AFQuery enables evidence-based ACMG/AMP variant classification using allele frequencies computed on your own cohort. This page shows how to apply the three AF-dependent ACMG criteria — BA1, PM2, and PS4 — with AFQuery commands and Python examples.

AFQuery supplements population databases

Local cohort AF provides complementary evidence to reference population databases like gnomAD. Use both: gnomAD for global population context, AFQuery for local cohort context.

BA1 — Stand-Alone Benign

Definition: Allele frequency > 5% in any population → variant is benign (stand-alone evidence).

When Local AF Matters for BA1